BridgeData V2: A Dataset for Robot Learning at Scale

BridgeData V2 is a large and diverse dataset of robotic manipulation behaviors designed to facilitate research in scalable robot learning. The dataset is compatible with open-vocabulary, multi-task learning methods conditioned on goal images or natural language instructions. In our experiments, we find that skills learned from the data generalize to novel objects and environments, as well as across institutions.

Dataset Composition

To support broad generalization, we collected data for a wide range of tasks in many environments with variation in objects, camera pose, and workspace positioning. BridgeData V2 contains 53,896 trajectories demonstrating 13 skills across 24 environments. Each trajectory is labeled with a natural language instruction corresponding to the task the robot is performing.

View a Random Trajectory

Use the "Sample" button to view a random trajectory from the dataset! We show the initial and final states of the trajectory, as well as the corresponding natural language annotation.

Evaluations of Offline Learning Methods

We evaluated several state-of-the-art offline learning methods using the dataset. We first evaluated on tasks that are seen in the training data. Even though these tasks are seen in training, the methods must still generalize to novel object positions, distractor objects, and lighting. Next, we evaluated on tasks that require generalizing skills in the data to novel objects and environments. Below we show videos for some of the seen and unseen tasks evaluated in the paper. All videos are shown at 2x speed.

Seen Goal-Conditioned Tasks

Unseen Goal-Conditioned Tasks

Seen Language-Conditioned Tasks

Unseen Language-Conditioned Tasks

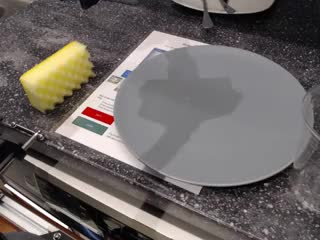

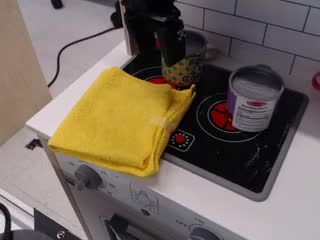

System Setup

All data was collected on a WidowX 250 6DOF robot arm. We collected demonstrations by teleoperating the robot with a VR controller. The control frequency is 5 Hz, and the average trajectory length is 38 timesteps. For sensing, we used an RGBD camera fixed in an over-the-shoulder view and two RGB cameras whose poses were randomized during data collection. Images are saved at 640x480 resolution.
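
To give a sense of scale, the figures above can be combined into a back-of-the-envelope estimate. The sketch below is illustrative only: the constant and function names are our own, it assumes each timestep spans one control period (1/5 s), and the totals are approximations derived from the average trajectory length rather than exact dataset statistics.

```python
# Figures quoted on this page.
CONTROL_HZ = 5              # control frequency in Hz
AVG_TIMESTEPS = 38          # average trajectory length in timesteps
NUM_TRAJECTORIES = 53_896   # trajectories in BridgeData V2


def trajectory_duration_s(num_timesteps: int, control_hz: float = CONTROL_HZ) -> float:
    """Approximate wall-clock duration, assuming one control period per timestep."""
    return num_timesteps / control_hz


# Average demonstration length in seconds.
avg_duration_s = trajectory_duration_s(AVG_TIMESTEPS)

# Rough totals, estimated from the average length (not exact counts).
approx_total_timesteps = NUM_TRAJECTORIES * AVG_TIMESTEPS
approx_total_hours = approx_total_timesteps / CONTROL_HZ / 3600

print(f"average demonstration: {avg_duration_s:.1f} s")
print(f"approximate total timesteps: {approx_total_timesteps:,}")
print(f"approximate total robot time: {approx_total_hours:.0f} h")
```

Under these assumptions, an average demonstration lasts about 7.6 seconds, and the dataset as a whole amounts to roughly two million timesteps.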